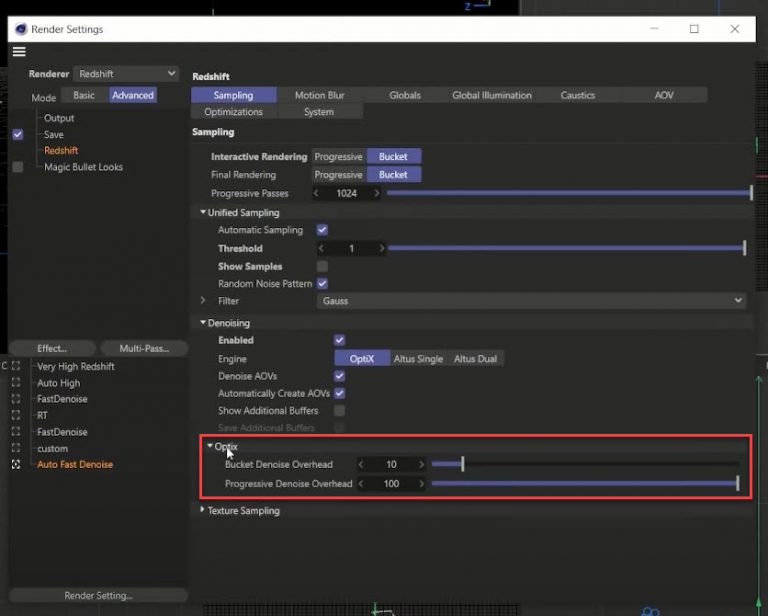

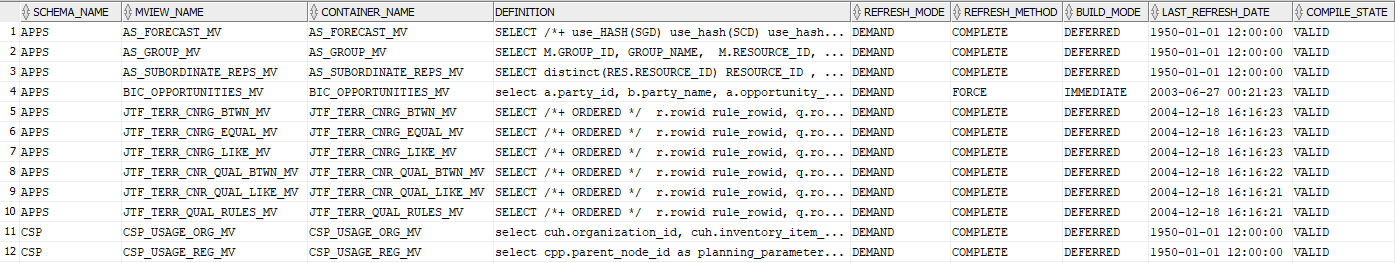

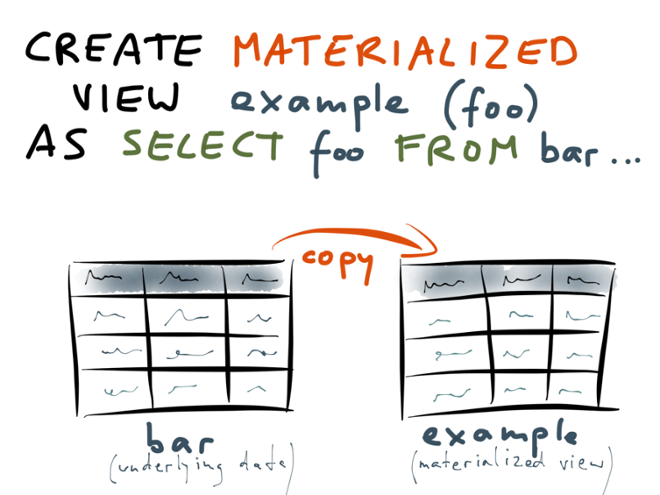

Before you get started, you must have Upsolver installed. If you haven’t yet installed Upsolver, find it in the AWS Marketplace, where you can deploy it directly into your VPC to securely access Kinesis Data Streams and Amazon Redshift.

Configuring Upsolver to stream events to Kinesis Data Streams that are consumed by Redshift using Streaming Ingestion

The following diagram represents the architecture to write events to Kinesis Data Streams (KDS) and Redshift:

To implement this solution, complete the following high-level steps:

1. Configure the source Kinesis data stream.
2. Create an Amazon Redshift external schema and materialized view.

For the purpose of this post, you’ll create an Amazon S3 data source that contains sample retail data in JSON format. Upsolver ingests this data as a stream; as new objects arrive, they are automatically ingested and streamed to the destination.

1. On the Upsolver console, select Data Sources, and in the top-right corner of the screen click New. Then select Amazon S3 as your data source.
2. For Bucket, you can use the bucket with the public dataset (as shown in the screenshot) or enter a bucket name with your own data.

Create an Amazon Redshift external schema and materialized view

First, create an IAM role with the appropriate permissions as described in the Amazon Redshift documentation on streaming ingestion. Then use the Redshift query editor, AWS Command Line Interface (AWS CLI), or API to run the following SQL statements.

1. Create an external schema that is backed by Kinesis Data Streams. This command requires you to include the IAM role you created by following the documentation.
2. Create a materialized view that allows you to execute a SELECT statement against the event data that Upsolver produces.

       CREATE MATERIALIZED VIEW mv_orders AS
       SELECT ApproximateArrivalTimestamp, SequenceNumber,
         json_extract_path_text(from_varbyte(Data, 'utf-8'), 'orderId') as order_id,
         json_extract_path_text(from_varbyte(Data, 'utf-8'), 'shipmentStatus') as shipping_status

3. Instruct Amazon Redshift to materialize the results to a table called mv_orders.
4. Run your queries against your streaming data, such as the following:

       SELECT * FROM mv_orders

Using Upsolver to write event data to the data lake and query it with Amazon Redshift Serverless

If you have any questions about using Streaming Ingestion with Upsolver, or anything related to building modern data pipelines, start the conversation in our Upsolver Community Slack channel.
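To make the materialized view’s column expressions easier to reason about, here is a minimal local sketch in Python of what `json_extract_path_text(from_varbyte(Data, 'utf-8'), 'orderId')` does conceptually: decode the record’s bytes as UTF-8, parse the text as JSON, and pull out one field as text. It is an analogue for illustration, not Redshift’s implementation. The helper name `extract_path_text` and the sample payload values are assumptions made up for this example; only the field names `orderId` and `shipmentStatus` come from the view definition in this post.

```python
import json
from typing import Optional


def extract_path_text(data: bytes, key: str) -> Optional[str]:
    """Hypothetical local analogue of the view's extraction expression.

    Decodes the payload as UTF-8, parses it as JSON, and returns one
    top-level field as text. Returns None when the key is absent; this is
    a choice made for the sketch, not a statement about Redshift behavior.
    """
    document = json.loads(data.decode("utf-8"))
    if key not in document:
        return None
    value = document[key]
    # Strings are returned as-is; other JSON values are re-serialized as text.
    return value if isinstance(value, str) else json.dumps(value)


# Made-up payload standing in for the bytes carried by one stream record.
sample_record = json.dumps(
    {"orderId": "A-1001", "shipmentStatus": "SHIPPED"}
).encode("utf-8")

# Mirror the two derived columns of mv_orders.
row = {
    "order_id": extract_path_text(sample_record, "orderId"),
    "shipping_status": extract_path_text(sample_record, "shipmentStatus"),
}
print(row)
```

Running a quick check like this against a few of your own sample events can help confirm the JSON key names before you define the view’s columns.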
